LLM (49 blogmarks)

← BlogmarksGoblins, gremlins, and raccoons. Oh my!

https://bsky.app/profile/emollick.bsky.social/post/3mkjwmbebr22pExcerpt of a recent addition to the Codex for GPT-5.5 system prompt:

Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query.

Project Glasswing: Securing critical software for the AI era

https://www.anthropic.com/glasswingMythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser. Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout—for economies, public safety, and national security—could be severe. Project Glasswing is an urgent attempt to put these capabilities to work for defensive purposes.

The 2025 AI Engineering Reading List

https://www.latent.space/p/2025-papersThe people at latent.space have curated a list of ~50 papers across ten areas for AI engineers looking to dig into relevant research in the AI and LLM space in 2025.

Here we curate “required reads” for the AI engineer. Our design goals are:

- pick ~50 papers (~one1 a week for a year), optional extras. Arbitrary constraint.

- tell you why this paper matters instead of just name drop without helpful context

- be very practical for the AI Engineer; no time wasted on Attention is All You Need, bc 1) everyone else already starts there, 2) most won’t really need it at work

Funny to see that they completely side-step "Attention is All You Need". As foundational as that paper is, they consider it old news and not practical for engineers in 2025.

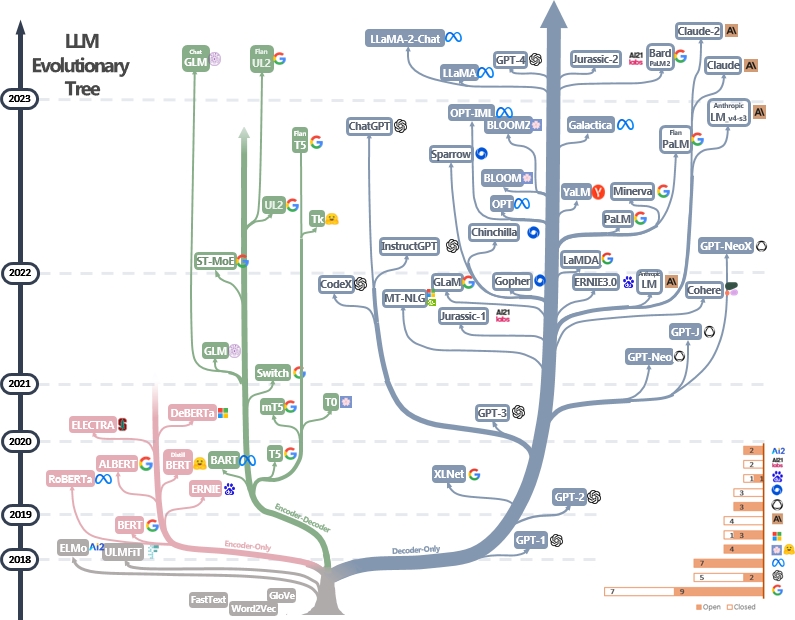

There is a repo LLM Practical Guide full of papers and an "LLM Tree" diagram that may have been a partial source of inspiration for the latent.space paper clubs. Unfortunately, this repo hasn't been updated since 2023. That said, there are still a lot of good resources in there.

This "LLM Tree" diagram is excellent showing the different branches of LLM tooling over time.

Ruby on Rails Edition of the Superpowers skills repo

https://github.com/lucianghinda/superpowers-rubyA Ruby on Rails–focused fork of obra/superpowers — a complete software development workflow for coding agents built on composable "skills".

I learned about this from Lucian Ghinda’s Linkedin post.

What is StepFun?

https://www.thewirechina.com/2025/06/08/what-is-stepfun/While looking at the OpenRouter AI Model Rankings, I noticed a model in the #2 spot that I had never heard of – Step 3.5 Flash (free) from StepFun. At this point I've heard the names of the major and not-so-major players in the AI space, but I'd never heard of StepFun.

I found the linked article covering the Shanghai-based AI company which was founded by Jiang Daxin, an ex-Microsoft employee.

Jiang told Chinese media last March that he chose to start his own business after U.S. firm OpenAI released ChatGPT in November 2022. “I thought, I can do it myself, maybe even better,” he said.

Here is a link to their web-based LLM chatbot if you're interested in giving their model(s) a try -- https://stepfun.ai/chats/new.

Benchmarks used to evaluate LLMs

AIME is the benchmark that I see most often mentioned in papers and blog posts.

Some mentioned in Jack Clark's latest ImportAI:

It tests these models across seven distinct benchmarks: AIME 2025, GSM8K, GPQA, HumanEval, BFCL, Arena-Hard, HealthBench-Easy.

From the DeepSeek-R1 paper:

We evaluate our models on MMLU (Hendrycks et al., 2021), MMLU-Redux (Gema et al., 2025), MMLU-Pro (Wang et al., 2024), C-Eval (Huang et al., 2023), and CMMLU (Li et al., 2024), IFEval (Zhou et al., 2023b), FRAMES (Krishna et al., 2024), GPQA Diamond (Rein et al., 2023), SimpleQA (OpenAI, 2024a), C-SimpleQA (He et al., 2024), SWE-Bench Verified (OpenAI, 2024b), Aider (Gauthier, 2025), LiveCodeBench (Jain et al., 2024) (2024-08 – 2025-01), Codeforces (Mirzayanov, 2025), Chinese National High School Mathematics Olympiad (CNMO 2024) (CMS, 2024), and American Invitational Mathematics Examination 2024 (AIME 2024) (MAA, 2024).

LLM Timeline

https://llmtimeline.web.app/There are so many things happening in the AI and LLM space and it is hard to keep track of it all, when it happened, who influenced a paper or model, what a paper or model influenced, etc. This "LLM Timeline" visualization does a nice job of that... up to early 2025. Unfortunately, this visualization misses out on a ton of developments since then including the November 2025 Inflection.

As someone who is fascinated by the research side of all of this, an awesome feature of this timeline is that you can click on a specific entity in the timeline and it includes several details and a link to the core white paper.

Everything in this visualization stems from the 2017 paper "Attention Is All You Need".

November 2025 Inflection

https://simonwillison.net/tags/november-2025-inflection/I've seen a lot of heads turn in the months since Opus 4.5 and GPT 5.1 were released. A lot of software developers going from "I use LLMs occassionaly" to "these models are good enough to use all the time."

As Simon Willison pointed out, this feels like a major inflection point in both the capabilities and adoption of LLMs:

November 2025 felt like an inflection point for coding agents, with Opus 4.5 and GPT 5.1 (and GPT-5.1 Codex) increasing the utility of those agents in a very noticeable way.

Transformer Explainer: LLM Transformer Model Visually Explained

https://poloclub.github.io/transformer-explainer/Transformer is the core architecture behind modern Al, powering models like ChatGPT and Gemini. Introduced in 2017, it revolutionized how Al processes information. The same architecture is used for training on massive datasets and for inference to generate outputs. Here we use GPT-2 (small), simpler than newer ones but perfect for learning the fundamentals.

This explainer provides a nice set of text and visuals going through how the Transformer architecture works.

Here is the "Attention Is All You Need" paper that ushered in all these advances in large language models.

the risk of AI side quests

https://bsky.app/profile/carnage4life.bsky.social/post/3mfytrcpux22qI've been in meetings where I've been asked to imagine a near-future in which members of the team who aren't currently producing code (e.g. project managers, C-suite, support team) are able to prompt an LLM blackbox with feature requests. Each of those requests will process in the background and eventually produce a preview environment for the prompter to look at and a PR to handoff to a software developer.

One of the assumptions baked in to the idea of this workflow is that there are all these well-defined, high-priority issues just sitting in the project management software waiting to be worked. Maybe that is the case in some orgs, however in my experience, the majority of teams and projects I've worked on don't have this. The backlog is a place where "nice-to-have" improvements and half-backed ideas collect dust and lose proximity to the state of the software system.

there’s a risk of AI side quests distracting from doubling down on the main one.

My hard-earned intuition for what works well with various LLM models and coding agents combined with my general expertise in software engineering combined with my knowledge of the specific codebase(s) are what allow me to get strong results from LLM tooling.

I suspect in a lot of cases I'd be tossing out the initial "engineer-out-of-the-loop" attempt and re-prompting from scratch, all while trying to keep tabs on the main quest work I have in progress.

Andrej Karpathy wrote a post recently that gets at why I think "asking an LLM to build that feature from the backlog" is not as straightforward as it seems:

It’s not perfect, it needs high-level direction, judgement, taste, oversight, iteration and hints and ideas. It works a lot better in some scenarios than others (e.g. especially for tasks that are well-specified and where you can verify/test functionality). The key is to build intuition to decompose the task just right to hand off the parts that work and help out around the edges.

I'd like to coin the term "AI-assisted Backlog Resurrection", but I don't think it's going to catch on.

Your codebase is NOT ready for AI

https://www.youtube.com/watch?v=uC44zFz7JSMTwo things:

- Coding agents operating on your codebase are much more powerful than a disconnected LLM chat session where you try to manually give whatever context you think is important.

- Many of our decades old (and newer too) software engineering practices have directly useful benefits to AI-assisted coding.

Your codebase, way more than the prompt that you use, way more than your AGENTS.md file, is the biggest influence on AI's output.

Use DDD's Bounded Context or A Philosophy of Software Design's Deep Modules to create clear boxes around functionality with interfaces on top.

Design your codebase for progressive disclosure of complexity.

The Claude C Compiler: What It Reveals About the Future of Software

https://www.modular.com/blog/the-claude-c-compiler-what-it-reveals-about-the-future-of-softwareCCC shows that AI systems can internalize the textbook knowledge of a field and apply it coherently at scale. AI can now reliably operate within established engineering practice. This is a genuine milestone that removes much of the drudgery of repetition and allows engineers to start closer to the state of the art.

Right now, where LLM coding agents can do there best work is in codebase where a solid foundation, clear conventions, and robust abstractions and patterns have all been well-established.

As writing code is becoming easier, designing software becomes more important than ever. As custom software becomes cheaper to create, the real challenge becomes choosing the right problems and managing the resulting complexity. I also see big open questions about who is going to maintain all this software.

Managing the complexity of large software systems has always been what makes software engineering challenging. While LLMs handle the “drudgery” of actually writing the code, are they simultaneously exacerbating the challenge of managing the complexity? If so, how do we, as “conductors” of coding agents, reign in that expanding complexity?

microgpt

http://karpathy.github.io/2026/02/12/microgpt/Andrej Karpathy’s latest blog post (he doesn’t post very often, so you wanna check it out when he does) presents an implementation of a GPT in 200 lines of python with no dependencies.

This file contains the full algorithmic content of what is needed: dataset of documents, tokenizer, autograd engine, a GPT-2-like neural network architecture, the Adam optimizer, training loop, and inference loop. Everything else is just efficiency.

Context Synergy

The idea of context synergy is that I want to produce high-context notes that can be leveraged in multiple ways. For instance, if I'm typing notes into logseq detailing a TODO for my current feature, I've been working to shift my phrasing to directly actionable notes instead of low-fidelity placeholder. I want that TODO from my notes to be something I can directly paste to an LLM. When I start writing my notes in this way, I find myself writing in an active voice. Those items can make there way into PR descriptions and project management issue descriptions.

Notes written in this way are useful to me, to an LLM, and to team members and stakeholders who are reading my issues and PRs.

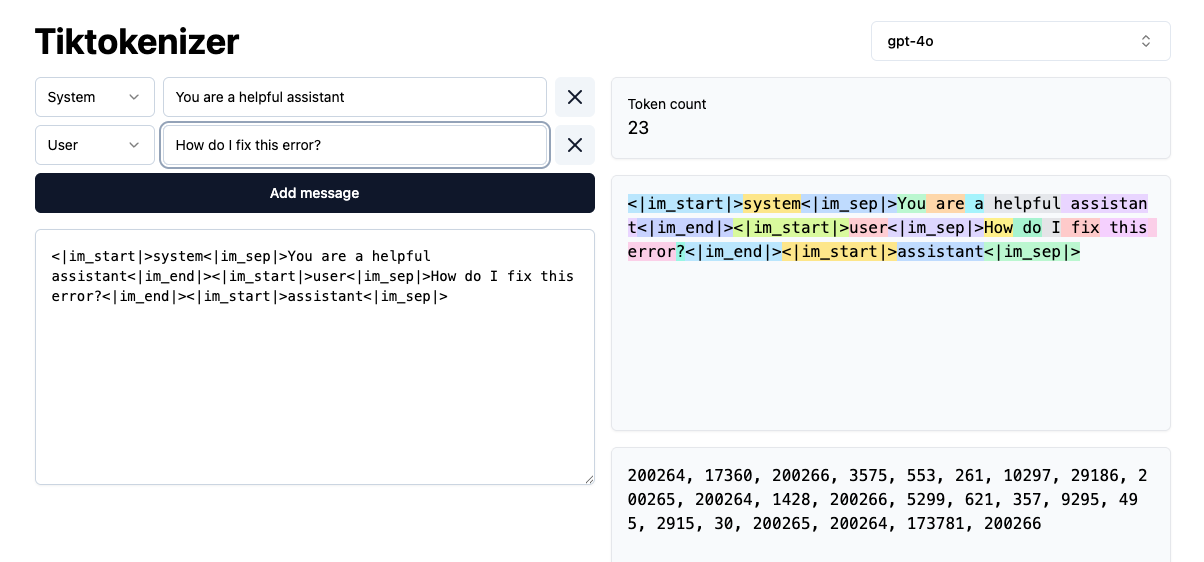

Tiktokenizer: visualize LLM prompt tokenization

https://tiktokenizer.vercel.app/Using OpenAI's BPE tokenizer (tiktoken), this app shows a visualization of how different models will tokenize prompts (system and user input). If you've wondered what it means that a model has a 100k token context window, these are the tokens that are being counted.

They vary from model to model because each model is using different vocabulary sizes as they try to optimize for their training corpus and overall inference capabilities. A smaller vocab size means smaller tokens which can be limiting for how "smart" the model can be. Larger vocab sizes can be an unlock for inference, but comes with a higher training cost and perhaps slower or more computationally expensive generation.

Agentic anxiety

https://jerodsanto.net/2026/02/agentic-anxiety/Something’s different this time, and I can say confidently this is the most unsure I’ve ever been about software’s future.

As Jerod puts it. It's not FOMO (fear of missing out) so much as it is FOBLB (fear of being left behind).

One fascinating part of our conversation with Steve Ruiz from tldraw started when he confessed that he feels bad going to bed without his Claudes working on something.

I think part of what is behind this is the same thing discussed in the HBR article, AI Doesn't Reduce Work--It Intensifies It, which points to this feeling that if we can do more we should be doing more.

AI Doesn’t Reduce Work—It Intensifies It

https://hbr.org/2026/02/ai-doesnt-reduce-work-it-intensifies-itThis 8-month study of 200 employees found that these employees used AI tools to work at a faster pace and take on a broader scope of work.

While this may sound like a dream come true for leaders, the changes brought about by enthusiastic AI adoption can be unsustainable, causing problems down the line. Once the excitement of experimenting fades, workers can find that their workload has quietly grown and feel stretched from juggling everything that’s suddenly on their plate. That workload creep can in turn lead to cognitive fatigue, burnout, and weakened decision-making.

Crucially,

The productivity surge enjoyed at the beginning can give way to lower quality work, turnover, and other problems.

This all rings pretty true to my own experience. I am definitely finding that I'm able to get more done with these tools, but that is, in part, because I've convinced myself that I can take on extra work because of the upfront time savings.

It is an ongoing challenge to explore how to balance all of it.

The findings from this study seem consistent with what happened with factory automation in the 20th century. Instead of reducing the workload, automation intensified the work, made it more repetitive, and increased the chance of injury. The physical stakes of AI's introduction to knowledge work is much different, but the parallels are there.

The Illustrated Word2vec

https://jalammar.github.io/illustrated-word2vec/Two central ideas behind word2vec are skipgram and negative sampling -- SGNS (Skipgram with Negative Sampling).

We start with random vectors for the embeddings and then we cycle through a bunch of training steps where we compute error values for the results of each step to use as feedback to update the model parameters for all involved embeddings. This process nudges the vectors of words toward or away from others based on their similarity via the error values.

Random notes from the post:

"Continuous Bag of Words" architecture can train against text by using a sliding window that both looks some number of words back and some number of words forward in order to predict the current word. It is described in this Word2Vec paper.

Skipgram architecture is a method of training where instead of using the surrounding words as context, you look at the current word and try to guess the words around it.

In training, you go step by step looking at the mostly likely predicted word produced by your model and then producing an error vector based on what words it should have ranked higher in its prediction. That error vector is then applied to the model to improve its subsequent predictions.

Cosine Similarity is a way to measure how similar two vectors are. For 2D vectors, this would be trivially measured by computing the distance between the two points. However, vectors can be many dimensions. "The good thing is, though, that cosine_similarity still works. It works with any number of dimensions."

Build a Large Language Model (From Scratch) - Sebastian Raschka

https://www.manning.com/books/build-a-large-language-model-from-scratchA lot of examples used when building up the concepts behind LLMs use vectors somewhere in the range of 2 to 10 dimensions. The dimensionality of real-world models is much higher:

The smallest GPT-2 models (117M and 125M parameters) use an embedding size of 768 dimensions... The largest GPT-3 model (175B parameters) uses an embedding size of 12,288 dimensions.

The Self-Attention Mechanism:

A key component of transformers and LLMs is the self-attention mechanism, which allows the model to weigh the importance of different words or tokens in a sequence relative to each other. This mechanism enables the model to capture long-range dependencies and contextual relationships within the input data, enhancing its ability to generate coherent and contextually relevant output.

Variants of the transformer architecture:

- BERT (Bidirectional Encoder Representations from Transformers) - "designed to pre-train deep bidirectional representations from unlabeled text by jointly conditioning on both left and right context in all layers."

- GPT (Generative Pretrained Transformers) - "primarily designed and trained to perform text completion tasks."

BERT receives input where some words are missing and then attempts to predict the most likely word to fill each of those blanks. The original text can then be used to provide feedback to the model's predictions during training.

GPT models are pretrained using self-supervised learning on next-word prediction tasks.

Foundation models are called that because the are generalized with their pretraining and can then be fine-tuned afterward to specific tasks.

The Unreasonable Effectiveness of Recurrent Neural Networks

https://karpathy.github.io/2015/05/21/rnn-effectiveness/I recently started reading How to Build a Large Language Model (from scratch). Early in the introduction of the book they mentioned that the breakthroughs behind the current surge in LLMs is relatively recent. A 2017 paper (Attention Is All You Need) from various people including several at Google Brain introduced major improvements over RNNs (Recurrent Neural Networks) and LSTMs (Long Short-Term Memory Networks). The paper introduced the Transformer architecture which uses self-attention which enables two important things. First, it allows for better recall of long-range dependencies as input is processed (which deals with the vanishing gradient problem). Second, it overcomes the sequential processing limitation of RNNs allowing parallelizable processing of input.

Anyway, all of this had me feeling like it would be nice to know a bit more about Recurrent Neural Networks. A top search result I turned up was this Andrej Karpathy blog post from 2015 (two years before the aforementioned Transformer paper). It goes into a good amount of detail about how RNNs, specifically LSTMs, work and has nice diagrams. You might need to brush up on your linear algebra to appreciate the whole thing.

Your job is to deliver code you have proven to work

https://simonwillison.net/2025/Dec/18/code-proven-to-work/To master these tools you need to learn how to get them to prove their changes work as well.

I’ve been using Claude Code to automate a series of dependency upgrades on a side project. I was surprised by the number of times it has confidently told me it fixed such-and-such issue and that it can now see that it works, when in fact it doesn’t work. I had to give it additional tests and tooling to verify the changes it was making had the intended effect.

My surprise at this was because the previous several upgrade steps it would deftly accomplish.

Almost anyone can prompt an LLM to generate a thousand-line patch and submit it for code review. That’s no longer valuable. What’s valuable is contributing code that is proven to work.

The step beyond this that I also view as table stakes is ensuring the code meets team standards, follows existing patterns and conventions, and doesn’t unreasonably accrue technical debt.

How to write a great agents.md: Lessons from over 2,500 repositories

https://github.blog/ai-and-ml/github-copilot/how-to-write-a-great-agents-md-lessons-from-over-2500-repositories/Put commands early: Put relevant executable commands in an early section: npm test, npm run build, pytest -v. Include flags and options, not just tool names. Your agent will reference these often.

I’ve seen big improvements in how agents do with my codebase when I include exactly what command and flags should be used for things like running the test suite.

Cover six core areas: Hitting these areas puts you in the top tier: commands, testing, project structure, code style, git workflow, and boundaries.

Covering these areas is a good starting point. I’m usually not sure where to start and only add things as needed.

Security beyond the model: Introducing AI system cards

https://www.redhat.com/en/blog/security-beyond-model-introducing-ai-system-cardsIn practice, end users engage with systems, not raw models, which is why a single foundational model can power hundreds of tailored solutions across domains. Without the surrounding infrastructure of an AI system, even the most advanced model remains untapped potential rather than a tool that solves real‑world problems.

System Prompts - Claude Docs

https://platform.claude.com/docs/en/release-notes/system-promptsSimon Willison shared this addition to Claude’s system prompts:

If the person is unnecessarily rude, mean, or insulting to Claude, Claude doesn't need to apologize and can insist on kindness and dignity from the person it’s talking with. Even if someone is frustrated or unhappy, Claude is deserving of respectful engagement.

It’s very interesting to read through the full prompts to see all the different kinds of instruction they start with.

Anthropic CEO: AI Will Be Writing 90% of Code in 3 to 6 Months

https://www.businessinsider.com/anthropic-ceo-ai-90-percent-code-3-to-6-months-2025-3Around March 14th, 2025:

"I think we will be there in three to six months, where AI is writing 90% of the code. And then, in 12 months, we may be in a world where AI is writing essentially all of the code," Amodei said at a Council of Foreign Relations event on Monday.

Amodei said software developers would still have a role to play in the near term. This is because humans will have to feed the AI models with design features and conditions, he said.

Via this Armin Ronacher article, via this David Crespo Bluesky post.

What Happened When I Tried to Replace Myself with ChatGPT in My English Classroom

https://lithub.com/what-happened-when-i-tried-to-replace-myself-with-chatgpt-in-my-english-classroom/Like many teachers at every level of education, I have spent the past two years trying to wrap my head around the question of generative AI in my English classroom. To my thinking, this is a question that ought to concern all people who like to read and write, not just teachers and their students.

Each student submitted a draft of writing on a common subject and when the teacher presented all the titles in aggregate, you could see a plagiaristic convergence.

I expected them to laugh, but they sat in silence. When they did finally speak, I am happy to say that it bothered them. They didn’t like hearing how their AI-generated submissions, in which they’d clearly felt some personal stake, amounted to a big bowl of bland, flavorless word salad.

Via conputer dipshit

How I keep up with AI progress

http://blog.nilenso.com/blog/2025/06/23/how-i-keep-up-with-ai-progress/To help with this, I’ve curated a list of sources that make up an information pipeline that I consider balanced and healthy. If you’re late to the game, consider this a good starting point.

Their top recommendation of what to follow if you were to only follow one thing is the same as mine — Simon Willison’s blog.

But don’t stop there, this article is jam packed with links to other people and resources in the AI and LLM space.

Adding a feature because ChatGPT incorrectly thinks it exists

https://www.holovaty.com/writing/chatgpt-fake-feature/This developer noticed a bunch of people were being referred to their product by ChatGPT for a feature that doesn’t exist. They decided to meet the demand and build the feature.

Hallucination Driven Development!

Author also highlights the challenge of LLM hallucinations creating false expectations and disappointment about a product.

Context engineering

https://simonwillison.net/2025/Jun/27/context-engineering/As Simon argues here, “prompt engineering” as a term didn’t really catch on. Perhaps “context engineering” better captures the task of providing just the right context to an LLM to get it to most effectively solve a problem.

It turns out that inferred definitions are the ones that stick. I think the inferred definition of "context engineering" is likely to be much closer to the intended meaning.

AI-assisted Coding and the Jevons Paradox

https://lobste.rs/s/42qb2p/i_am_disappointed_ai_discourse#c_6sp5oiSimon Willison on whether LLMs are going to replace software developers and what these tools mean for our careers:

I do think that AI-assisted development will drop the cost of producing custom software - because smaller teams will be able to achieve more than they could before. My strong intuition is that this will result in massively more demand for custom software - companies that never would have considered going custom in the past will now be able to afford exactly the software they need, and will hire people to build that for them. Pretty much the Jevons paradox applied to software development.

I am disappointed in the AI discourse

https://steveklabnik.com/writing/i-am-disappointed-in-the-ai-discourse/It’s more that both the pro-AI and anti-AI sides are loudly proclaiming things that are pretty trivially verifiable as not true. On the anti side, you have things like the above. And on the pro side, you have people talking about how no human is going to be programming in 2026. Both of these things fail a very basic test of [is it true?].

turbopuffer

https://turbopuffer.com/docsturbopuffer is a fast search engine that combines vector and full-text search using object storage, making all your data easily searchable.

Turbopuffer is used by Cursor, Linear, Notion, and others to do improved search across large document sets by combining vector and FTS.

I learned about it from this article on how Cursor uses Merkle Trees for Indexing Your Codebase.

What is a tool?

https://tante.cc/2025/04/27/are-ai-system-really-tools/This is a fun thought experiment. What is a tool? What distinguishes a tool from any object used in a make-shift way in place of a specific tool?

The author is making the argument that AI systems are not tools. I don’t find the argument compelling, but I do still think it opens up an interesting discussion. Here is the crux of their argument:

Tools are not just “things you can use in a way”, they are objects that have been designed with great intent for a set of specific problems, objects that through their design make their intended usage obvious and clear (specialized tools might require you to have a set of domain knowledge to have that clarity). In a way tools are a way to transfer knowledge: Knowledge about the problem and the solutions are embedded in the tool through the design of it.

Simon Willison has a good comment in the lobsters thread:

An LLM is a tool for turning input text that contains instructions into output text that follows those instructions.

If a laptop computer or a blank piece of paper is a tool, then an LLM is a tool. All three have an incredibly wide range of potential uses, many of which were never dreamed of by their creators.

If we aren’t allowed to call those “tools” then I guess we need a new word that describes them.

Via: lobsters

Vibe Coding the Right Way

https://wycats.substack.com/p/vibe-coding-the-right-wayTL;DR I use coding assistants to build out a piece of code. Once I feel ok with the initial output, I switch back into normal coding mode. I refactor the code, carefully document it and introduce abstractions that capture common patterns. I also write good tests for the code. For testing, I use my “normal coding brain” to come up with the test cases but lean on AI code accelerators to speed up the process.

I like this term AI code accelerators. It suggests that it helps do what you’d already decided to do. It helps with the boilerplate or repetition for instance.

I’m seeing more and more of this genre of article where very experienced software engineer describes their AI coding workflow.

Weaving Systems Together

http://geoffreylitt.com/2025/04/12/how-i-made-a-useful-ai-assistant-with-one-sqlite-table-and-a-handful-of-cron-jobs.htmlWhat I find fascinating by this article is less the idea of an “AI Assistant” and more the way a handful of tools can be weaved together into a useful personal tool.

Val.town acts as the glue for making requests to Claude and a SQLite database. It uses telegram as the UI where messages can be sent and received. It also connects to other systems like google calendar as well as being able to receive inbound emails.

LLM as contextual thesaurus for writing

https://bsky.app/profile/justinjackson.ca/post/3lmdjuld6lk2fJustin describes a use case for LLMs in writing, where he asked for the difference between "consumed" and "subsumed" and which would make more sense in what he is trying to say. The LLM gives definitions of both and suggests that "subsumed" is a better fit citing specifically why in the context of what is written.

I've done this and a similar thing where I'm trying to remember a word, it is on the tip of my tongue, so I describe what I'm trying to say and the LLM makes some suggestions. This can also be generally done for terms and phrases where you know the general idea but aren't sure what the technical term is.

Here is an example of this that I just did with Claude:

Me: "What do you call the kind of cognitive bias where you have already made up your mind, so as you engage in a shallow investigation, you key in to supporting evidence and ignore things that contradict your opinion?"

Claude:

This is known as confirmation bias. It's the tendency to search for, interpret, favor, and recall information in a way that confirms or supports your pre-existing beliefs or values, while giving less consideration to alternative possibilities or contradictory evidence.

When experiencing confirmation bias, people tend to:

- Selectively notice and emphasize evidence that supports their existing belief

- Discount or ignore evidence that contradicts their view

- Interpret ambiguous information in ways that reinforce their position

- Seek out sources that align with their perspective

It's one of the most well-documented cognitive biases and affects people across many domains, including politics, personal relationships, scientific research, and everyday decision-making. Confirmation bias can be particularly problematic because it creates a self-reinforcing cycle that makes it difficult to change one's mind even when presented with new, relevant information.

Horseless intelligence

https://nedbatchelder.com/blog/202503/horseless_intelligence.htmlMy advice about using AI is simple: use AI as an assistant, not an expert, and use it judiciously. Some people will object, “but AI can be wrong!” Yes, and so can the internet in general, but no one now recommends avoiding online resources because they can be wrong. They recommend taking it all with a grain of salt and being careful. That’s what you should do with AI help as well.

Skeptics of the uses of LLMs typically point to strawman arguments and gotchas to wholesale discredit LLMs*. They're either clinging too tightly to their bias against these tools or completely missing the point. These tools are immensely useful. They aren't magic boxes though, despite what the hypemen might want you to believe. If you take a little extra effort to use them well, they are a versatile swiss army knife to add to your software tool belt."Use [these LLMs] as an assistant, not an expert."

*There are soooo many criticisms and concerns we can and should raise about LLMs. Let's have those conversations. But if all you're doing is saying, "Look, I asked it an obvious question that I know the answer to and it got it WRONG! Ha, see, this AI stuff is a joke." The only thing you're adding to the dialogue is a concern for your critical thinking skills.

If you approach AI thinking that it will hallucinate and be wrong, and then discard it as soon as it does, you are falling victim to confirmation bias. Yes, AI will be wrong sometimes. That doesn’t mean it is useless. It means you have to use it carefully.

Use it carefully, and use it for what it is good at.

For instance, it can scaffold a 98% solution to a bash script that does something really useful for me. Something that I might have taken an hour to write myself or something I would have spent just as much time doing manually.

Another instance, I'm about to write a couple lines of code to do X. I've done something like X before, I have an idea of how to approach it. A habit I've developed that enriches my programming life is to prompt Claude or Cursor for a couple approaches to X. I see how those compare to what I was about to do.

There are typically a few valuable things I get from doing this:

- The effort of putting thoughts into words to make the prompt clarifies my thinking about the problem itself. Maybe I notice an aspect of it I hadn't before. Maybe I have a few sentences that I can repurpose as part of a PR description later.

- The LLM suggests approaches I hadn't considered. Maybe it suggests a command, function, or language feature I don't know much about. I go look those things up and learn something I wouldn't have otherwise encountered.

- The LLM often draws my attention to edge cases and other considerations I hadn't thought of when initially thinking through a solution. This leads me to develop more complete and robust solutions.

I’ve used AI to help me write code when I didn’t know how to get started because it needed more research than I could afford at the moment. The AI didn’t produce finished code, but it got me going in the right direction, and iterating with it got me to working code.

Sometimes you're staring at a blank page. The LLM can be the first one to toss out an idea to get things moving. It can get you to that first prototype that you throw away once you've wrapped your head around the problem space.

Your workflow probably has steps where AI can help you. It’s not a magic bullet, it’s a tool that you have to learn how to use.

This reiterates points I made above. Approach LLMs with an open and critical mind, give it a chance to see where they can fit into your workflow.

Claude Code - Anthropic

https://docs.anthropic.com/en/docs/agents-and-tools/claude-code/overviewI'm giving Claude Code a try. It is a terminal-based LLM agent that can iterate on software tasks with a human in the loop to prompt a task description, confirm or abort individual actions, and guide the process.

Claude Code does usage-based metering. As I'm currently looking at the "Buy Credits" page, there is an initial billing limit of $100 per month:

All new accounts have a monthly limit of $100 credits/month. This limit increases with usage over time.

After purchasing credits, I'm presented with a hero section large font that says:

Build something great

Once logged in to Claude Code in the terminal, I am first shown the following security notes:

Security notes:

1. Claude Code is currently in research preview

This beta version may have limitations or unexpected behaviors.

Run /bug at any time to report issues.

2. Claude can make mistakes

You should always review Claude's responses, especially when

running code.

3. Due to prompt injection risks, only use it with code you trust

For more details see:

https://docs.anthropic.com/s/claude-code-security

The 70% problem: Hard truths about AI-assisted coding

https://addyo.substack.com/p/the-70-problem-hard-truths-aboutLLMs are no substitute for the hard-won expertise of years of building software, working within software teams, and evolving systems. You can squeeze the most out of iterations with a coding LLM by bringing that experience to every step of the conversation.

In other words, they're applying years of hard-won engineering wisdom to shape and constrain the AI's output. The AI is accelerating their implementation, but their expertise is what keeps the code maintainable.

The 70% problem

A tweet that recently caught my eye perfectly captures what I've been observing in the field: Non-engineers using AI for coding find themselves hitting a frustrating wall. They can get 70% of the way there surprisingly quickly, but that final 30% becomes an exercise in diminishing returns.

Addy goes on to describe this "two steps back pattern" where a developer using an LLM encounters an error, they ask the LLM to suggest a fix, the fix sorta works but two other issues crop up, and repeat.

This cycle is particularly painful for non-engineers because they lack the mental models to understand what's actually going wrong. When an experienced developer encounters a bug, they can reason about potential causes and solutions based on years of pattern recognition.

Beyond having the general programming and debugging experience to expedite this cycle, there is also an LLM intuition to be developed. I remember John Lindquist describing that he notices certain "smells" when working with LLMs. For instance, often when you're a couple steps into a debugging cycle with an LLM and it starts wanting to go make changes to config files, that is a smell. It's a "smell" because it should catch your attention and scrutiny. A lot of times this means the LLM is way off course and it is now throwing generative spaghetti at the wall. I learned two useful things from John through this:

- You have to spend a lot of time using different models and LLM tools to build up your intuition for these "smells".

- When you notice one of these smells, it's likely that the LLM doesn't have enough or the right context. Abort the conversation, refine the context and prompt, and try again. Or feed what you've tried into another model (perhaps a more powerful reasoning one) and see where that gets you.

Being able to do any of that generally hinges on having already spent many, many years debugging software and having already developed some intuitions for what is a good next step and what is likely heading toward a dead end.

These LLM tools have shown to be super impressive at specific tasks, so it is tempting to generalize their utility to all of software engineering. However, at least for now, we should recognize the specific things they are good at and use them for that:

This "70% problem" suggests that current AI coding tools are best viewed as:

- Prototyping accelerators for experienced developers

- Learning aids for those committed to understanding development

- MVP generators for validating ideas quickly

I'd at to this list:

- Apply context-aware boilerplate autocomplete — establish a pattern in a file/codebase or rely on existing library conventions and a tool like Cursor will often suggest an autocompletion that saves a bunch of tedious typing.

- Scaffold narrow feature slices in a high-convention framework or library — Rails codebases are a great example of this where the ecosystem has developed strong conventions that span files and directories. The LLM can generate 90% of what is needed, following those conventions. By providing specific rules about how you develop in that ecosystem and a tightly defined feature prompt, the LLM will produce a small diff of changes that you can quickly assess and test for correctness. To me this is distinct from the prototyping item suggested by Addy because it is a pattern for working in an existing codebase.

Now you don’t even need code to be a programmer. But you do still need expertise

https://www.theguardian.com/technology/2025/mar/16/ai-software-coding-programmer-expertise-jobs-threatThis quote about Simon is spot on and it is why I recommend his blog whenever I talk to another developer who is worried about LLM/AI advancement.

A leading light in this area is Simon Willison, an uber-geek who has been thinking and experimenting with LLMs ever since their appearance, and has become an indispensable guide for informed analysis of the technology. He has been working with AI co-pilots for ever, and his website is a mine of insights on what he has learned on the way. His detailed guide to how he uses LLMs to help him write code should be required reading for anyone seeking to use the technology as a way of augmenting their own capabilities. And he regularly comes up with fresh perspectives on some of the tired tropes that litter the discourse about AI at the moment.

It is tough to wade through both the hype and the doom while trying to keep tabs on "the latest in AI". Simon has an excitement for this stuff, but it is always balanced, realistic, and thoughtful.

The author then goes on to quote Tim O'Reilly on the subject of "what does this mean for programming jobs?"

As Tim O’Reilly, the veteran observer of the technology industry, puts it, AI will not replace programmers, but it will transform their jobs.

Which compliments the sentiment from Laurie Voss' latest post AI's effects on programming jobs which expects we will see a lot more programming jobs in the wake of an LLM transformation of the industry.

And as my friend Eric suggested, the Jevons Paradox may come in to play where programmers are the "resource" being more efficiently consumed which will, paradoxically, increase the demand for programmers.

AI's effects on programming jobs

https://seldo.com/posts/ai-effect-on-programming-jobsI would like to advance a third option, which is that AI will create many, many more programmers, and new programming jobs will look different.

What do we call the emerging type of programming job where a person is instructing or orchestrating AIs and LLMs to do work while not necessarily knowing the lower level details (code)?

Including "AI" in the name feels wrong though, it's got a horseless carriage feel. All programming will involve AI, so including it in the name will be redundant.

The statement “All programming will include AI.” caught me attention. It seems like an optional, even niche tool at the moment. The prediction here being that it will become ubiquitous, perhaps to the same degree as using an IDE or to using auto code formatters.

I find myself and lots of others pondering on the impact of LLMs on software development to want to generalize to one big brush stroke, but I think the reality is going to be closer to what is described here.

I think we will see all three at the same time. Some AI-assisted software development will raise the bar for quality to previously cost-ineffective heights. Some AI-driven software will be the bare minimum, put together by people who could never have successfully written software before. And a great deal will be software of roughly the quality we see already, produced in less time, and in correspondingly greater quantity.

The general sentiment of this post is that there will be more jobs, not fewer. And that the impact on salaries (existing ones at least) won’t be much.

Some words of caution though:

But the adjustment won't be without pain: some shitty software will get shipped before we figure out how to put guardrails around AI-driven development. Some programmers who are currently shipping mediocre software will find themselves replaced by newer, faster, AI-assisted developers before they manage to learn AI tools themselves. Everyone will have a lot of learning to do. But what else is new? Software development has always evolved rapidly. Embrace change, and you'll be fine.

Via Seldo on Bluesky

LLMs amplify existing technical decisions

https://bsky.app/profile/nateberkopec.bsky.social/post/3lkj4kp53gt2pIf you’ve made sustainable decisions and developed good patterns, LLMs can amplify those. If you’ve made poor technical decisions, LLMs will propagate that technical debt.

Technical debt accumulates when people just "glob on" one more thing to an existing bad technical decision.

We chicken out and ship 1 story point, not the 10 it would take to tidy up.

LLMs encourage this even more. See thing, make more of thing. Early choices get copied.

Here’s how I use LLMs to help me write code

https://simonwillison.net/2025/Mar/11/using-llms-for-code/There are a bunch of great tips in here for getting better use out of LLMs for code generation and debugging.

The best way to learn LLMs is to play with them. Throwing absurd ideas at them and vibe-coding until they almost sort-of work is a genuinely useful way to accelerate the rate at which you build intuition for what works and what doesn't.

LLMs are no replacement for human intuition and experience. I've spent enough time with GitHub Actions that I know what kind of things to look for, and in this case it was faster for me to step in and finish the project rather than keep on trying to get there with prompts.

GitHub - yamadashy/repomix: 📦 Repomix

https://github.com/yamadashy/repomix📦 Repomix (formerly Repopack) is a powerful tool that packs your entire repository into a single, AI-friendly file. Perfect for when you need to feed your codebase to Large Language Models (LLMs) or other AI tools like Claude, ChatGPT, DeepSeek, Perplexity, Gemini, Gemma, Llama, Grok, and more.

I used this for the first time to quickly bundle up a Python program into a single file that I could hand to Claude for help with a setup issue.

Assuming I'm already in the directory of the project, I can run:

$ npx repomix

I've been experimenting with mise lately for managing tool versions like node, so I'll use that.

Here I ask mise to run npx repomix in the context of Node.js v23:

$ mise exec node@23 -- npx repomix

It spit out a file called repomix-output.txt.

I wanted to drag that file from Finder into the Claude app, so I then ran:

$ open . -a Finder.app

Claude 3.7 Sonnet and Claude Code \ Anthropic

https://www.anthropic.com/news/claude-3-7-sonnetAn AI coding tool that I use directly from the terminal?! 👀

Claude Code is available as a limited research preview, and enables developers to delegate substantial engineering tasks to Claude directly from their terminal.

"thinking tokens"? Does that mean the input and output tokens that are used as part of intermediate, step-by-step "reasoning"?

In both standard and extended thinking modes, Claude 3.7 Sonnet has the same price as its predecessors: $3 per million input tokens and $15 per million output tokens—which includes thinking tokens.

The Claude Code Overview shows how to get started installing and using Claude Code in the terminal.

They all use it, by Thorsten Ball

https://registerspill.thorstenball.com/p/they-all-use-itMostly online, but in an occasional real-world conversation someone will be expressing their disinterest and dissatisfaction with LLMs in the realm of software development and they'll say, "I tried it and it just made stuff up. I don't trust it. It will take me less time to build it myself than fix all its mistakes."

My immediate follow-up question is usually "what model / LLM tool did you use and when?" because the answer is often GitHub copilot or some free-tier model from years ago.

But what I want to do is step back here like Thorsten and ask, "Aren't you curious? Don't you want to know how these tools fit into what we do and how they might start to reshape our work?"

What I don’t get it is how you can be a programmer in the year twenty twenty-four and not be the tiniest bit curious about a technology that’s said to be fundamentally changing how we’ll program in the future. Absolutely, yes, that claim sounds ridiculous — but don’t you want to see for yourself?

The job requires constant curiosity, relearning, trying new techniques, adjusting mental models, and so on.

What I’m saying is that ever since I got into programming I’ve assumed that one shared trait between programmers was curiosity, a willingness to learn, and that our maxim is that we can’t ever stop learning, because what we’re doing is constantly changing beneath our fingers and if we don’t pay attention it might slip aways from us, leaving us with knowledge that’s no longer useful.

I suspect much of the disinterest is a reaction to the (toxic) hype around all things AI. There is too much to learn and try for me to let the grifters dissuade me from the entire umbrella of AI and LLMs. I make an effort to try out models from all the major companies in the space, to see how they can integrate into the work I do, how things like Cursor can augment my day-to-day, discussing with others what workflows, techniques, prompts, strategies, etc. can lead to better, exciting, interesting, mind-blowing results.

I certainly don't think the writing is on the wall for all this GenAI stuff, but it feels oddly incurious if not negligent to simply write it off.

MarkDownload: browser extension to clip websites and download them into a readable markdown file

https://github.com/deathau/markdownloadI learned about this one from Taylor Bell. It's a browser (Chrome) extension that can grab the main content for a page as markdown which you can then download. The reason this is useful is because you can drag and drop a file like this into a tool like Claude, Cursor, ChatGPT, etc.

Let's say you are writing some code that uses a specific, niche library. Instead of relying on the LLM to know the ins and outs of the library and knowing about aspects of the latest version of that library, you can grab a markdown version of their latest docs and pass that in as context.

Based on the documentation and code examples in the given file, can you rework the previous script to make sure it is using the latest features and best practices of this library?

Or a similar thing I did recently was: after reading a blog post on a new-ish PostgreSQL feature, I grabbed the markdown for the blog post, and asked Claude for some examples of using the feature described in the blog post, but tailored to a situation I went on to describe.

When we can provide highly-specific, up-to-date context like this to an LLM, we are going to get much better results than if we toss up a one-sentence request.

I installed the extension directly from the Chrome Web Store, but I primarily linked to the GitHub project because that gives you the option to tweak and manually install the extension if that's preferred.

The Empty Promise of AI-Generated Creativity

https://hey.paris/posts/genai/This isn’t merely a technical limitation to be overcome with more data or better algorithms. It’s a fundamental issue: AI systems lack lived experience, cultural understanding, and authentic purpose—all essential elements of meaningful creative work. When humans craft stories, they draw upon personal struggles, cultural tensions, and genuine emotions. AI simply cannot access these wellsprings of authentic creation.

My LLM codegen workflow atm

https://harper.blog/2025/02/16/my-llm-codegen-workflow-atm/Flip the script by getting the Conversational LLM to ask you questions instead of you asking it questions.

Ask me one question at a time so we can develop a thorough, step-by-step

spec for this idea. Each question should build on my previous answers, and

our end goal is to have a detailed specification I can hand off to a

developer. Let’s do this iteratively and dig into every relevant detail.

Remember, only one question at a time.Here’s the idea:

...

This is a good way to hone an idea, rubberduck, and think through a problem space.

I tried a session of this with Claude Sonnet 3.7. It asked a lot of good questions and got me thinking. After maybe 8 or so questions I ran into the warning from Claude about the conversation getting too long and running into usage limits (not sure what to do about that speed bump yet).